Facebook to Read Your Thoughts Soon – Courtesy Augmented Reality

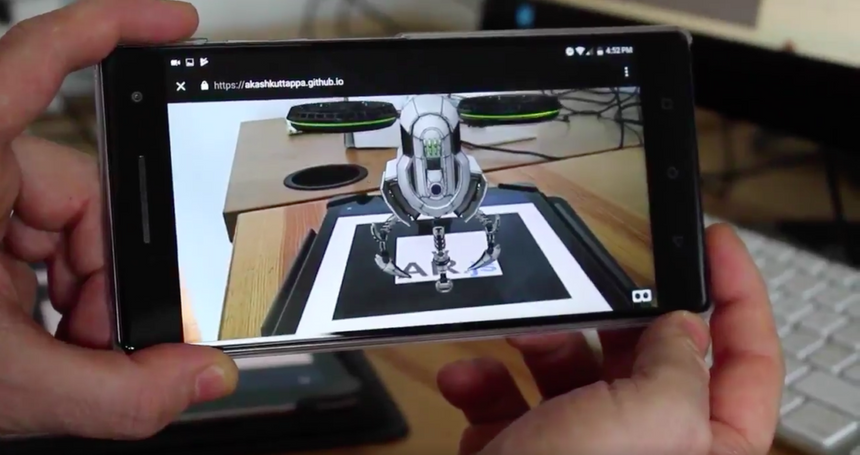

Recently, Facebook hinted at the progress of its brain-computer interface (BCI) program, which aims to read brain activity and decode intended messages into text, completely hands-free, as an input method for augmented reality glasses.

If you’ve ever tried to imagine how far augmented reality technology can go in transforming how we live and engage in everyday activities, then it’s time to expand your mind some more. As more possibilities unfold, the limits and boundaries are pushed even further. Facebook’s exploration of BCI seeks to answer the question of input for all-day wearable augmented reality glasses.

The first announcement about the brain-computer interface (BCI) program was made at F8 in 2017, when Facebook revealed it was working on two projects focused on silent speech communication. One of the two projects, Facebook explained, would allow people to type straight from their brains.

The update from Facebook shows that the project is still ongoing and that the company is fully invested in it as it charts the course for the future of digital communication. As part of its effort to make BCI a reality, Facebook teamed up with researchers at the University of California, San Francisco (UCSF), who have been working on using BCI to improve the lives of people suffering from paralysis.

For Facebook, which is interested in transforming how we interact with technology and making that interaction as seamless as possible, the common interest in BCI presented an opportunity to explore the possibility of decoding speech from brain activity in real time.

In research results published in Nature Communications, the researchers reported the progress they’ve made in creating an algorithm that they said could “decode a small set of full, spoken words and phrases from brain activity in real time.” They achieved this by working with patients with epilepsy who were undergoing surgery. Facebook also noted that work is continuing to enable the system to decode a larger vocabulary and to reduce the error rate.

Project Steno, as it is called, is run by Facebook’s Reality Labs, which will continue to provide funding and engineering support to the UCSF research team until the project reaches its final stage. The researchers aim “to reach a real-time decoding speed of 100 words per minute with a 1,000-word vocabulary and word error rate of less than 17%.”
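
For readers unfamiliar with the metric, word error rate is commonly computed as the number of word-level substitutions, deletions, and insertions needed to turn the decoded text into the reference text, divided by the number of words in the reference. The Python sketch below illustrates that common definition only. It is not the UCSF team’s evaluation code, and the function name and example sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words.

    Illustrative helper (hypothetical name), not taken from the research.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # a reference word was dropped (deletion)
                curr[j - 1] + 1,     # an extra word was decoded (insertion)
                prev[j - 1] + cost,  # words match, or one was substituted
            )
        prev = curr
    return prev[-1] / len(ref)


# Made-up example: one substituted word and one dropped word out of eight.
wer = word_error_rate(
    "please turn the lights on in the kitchen",
    "please turn the light on in kitchen",
)
print(f"WER = {wer:.0%}")  # prints "WER = 25%"
print("meets the 17% target:", wer < 0.17)
```

In this made-up case, two errors across eight reference words give a 25% error rate, which would miss the quoted target of less than 17%.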

Although the researchers’ work involves electrodes implanted in patients with speech loss, Facebook’s dream for this technology is that it will work without any brain implant. Instead, it wants to use “non-invasive, wearable sensors that can be manufactured at scale.” The current research is therefore meant to establish whether it is possible to restore a person’s ability to communicate using brain activity, and to lay the algorithmic foundation for making this work.

Facebook also acknowledged that the UCSF team’s results so far, which rely on implanted electrodes, still exceed what it has been able to achieve using non-invasive methods. Facebook is therefore exploring other options to reach its goal.

One such method uses near-infrared light to detect shifts in oxygen levels within the brain, which serve as an indirect measure of brain activity. Facebook is trying to achieve this using “a portable, wearable device made from consumer-grade parts.”

In a statement, Facebook writes, “We don’t expect this system to solve the problem of input for AR anytime soon. It’s currently bulky, slow, and unreliable. But the potential is significant, so we believe it’s worthwhile to keep improving this state-of-the-art technology over time. And while measuring oxygenation may never allow us to decode imagined sentences, being able to recognize even a handful of imagined commands, like “home,” “select,” and “delete,” would provide entirely new ways of interacting with today’s VR systems — and tomorrow’s AR glasses.”

“We’re also exploring ways to move away from measuring blood oxygenation as the primary means of detecting brain activity and toward measuring movement of the blood vessels and even the neurons themselves. Thanks to the commercialization of optical technologies for smartphones and LiDAR, we think we can create small, convenient BCI devices that will let us measure neural signals closer to those we currently record with implanted electrodes — and maybe even decode silent speech one day,” the statement reads.

The Future of Computing

In the future of computing, human-computer interaction becomes such an integral part of people’s lives that they are barely conscious of it, particularly because it no longer depends on physical contact with devices. AR holds a critical position in helping to realize this future.

According to Facebook, “The promise of AR lies in its ability to seamlessly connect people to the world that surrounds them — and to each other. Rather than looking down at a phone screen or breaking out a laptop, we can maintain eye contact and retrieve useful information and context without ever missing a beat.”

How amazing that future promises to be! But before we get too excited about these possibilities, it is worth noting that it could take a decade before they begin to unfold.

In its report on F8 2017 proceedings, Facebook wrote: “The set of technologies needed to reach full AR doesn’t exist yet. This is a decade-long investment and it will require major advances in material science, perception, graphics and many other areas. But once that’s achieved, AR has the potential to enhance almost every aspect of our lives, revolutionizing how we work, play and interact.”

While we ponder this move for now, it is only a matter of time before we hear of the next “big” thing people are trying to achieve with this technology.

References:

- https://newsroom.fb.com/news/2017/04/f8-2017-day-2/

- https://tech.fb.com/imagining-a-new-interface-hands-free-communication-without-saying-a-word/

- https://www.nature.com/articles/s41467-019-10994-4

- https://mashable.com/article/facebook-brain-reading-ar-glasses/?europe=true

- https://www.pcgamer.com/facebook-wants-to-read-your-thoughts-with-its-augmented-reality-glasses/